This is the result of our team, Humans x AI x SOUND, at the Ars Electronica Hackathon 2020.

Face tracking emotions into music

Ars Electronica Festival 2020 addresses the current feeling of uncertainty about how the COVID-19 crisis will shape us as individuals, as societies, and as humanity. The festival focuses on two tensions: between AUTONOMY and DEMOCRACY, and between TECHNOLOGY and ECOLOGY.

Recent advances in AI have put within reach a world where art can be created and performed entirely by algorithms. In a series of panels, workshops, and live performances, AIxMUSIC explores the fine line between the artist and the machine, and the interactions between them.

On the occasion of the first online Ars Electronica Festival, Ars Electronica hosted its first International AIxMusic Hackathon as part of the AIxMusic Festival 2020. The hackathon took place online during the Ars Electronica Festival from 9 to 13 September 2020; the presentations were streamed live on Ars Electronica New Worlds on Sunday, 13 September 2020.

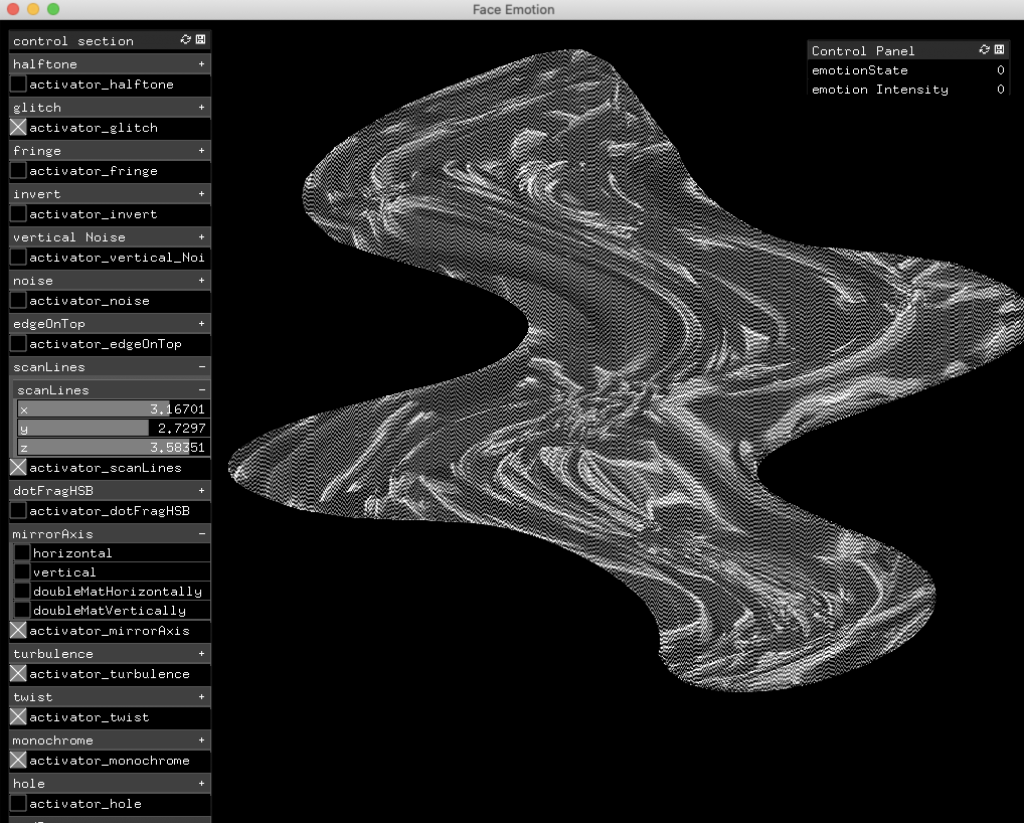

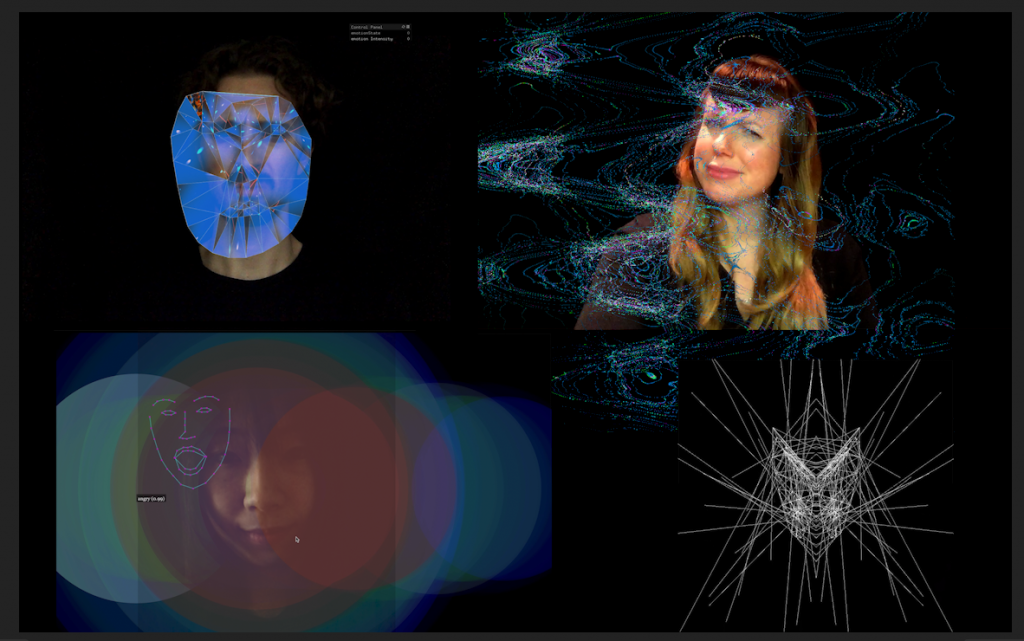

Group 2: AIxMUSICxHUMAN. The team explored how to design user interactions when humans and machine learning models share the musical loop. Team members Amy Karle, Sergio Lecuona, Jing Dong, Pierre Tardif, and Suyash Joshi considered how to interact through digital communication in ways that foster understanding, experimenting with facial tracking to capture emotions as input and turn them into sound and visuals.

- Amy Karle 🔮

- Sergio Lecuona sergiolecuona 💽

- Jing Dong techjing.art 🐬

- Pierre Tardif codingcoolsh.it 💾

- Suyash Joshi 📱

Ars Electronica AIxMusic Online Hackathon: Final Presentations